The bottleneck moved

A year ago, the hard part was getting an AI to write decent code. That's solved. Between Cursor, Claude Code, and Codex, the models are good enough. Engineers aren't waiting for better autocomplete. They're waiting for agents that can actually finish the job.

But here's what happens in practice: an engineer kicks off an agent to fix a bug. The agent writes a patch. It looks reasonable. The engineer still has no idea if it works, because the agent couldn't access staging. It couldn't run the test suite against real data. It couldn't check Sentry to see whether the error pattern matched. It couldn't verify its own work.

The agent wrote code. It didn't do engineering.

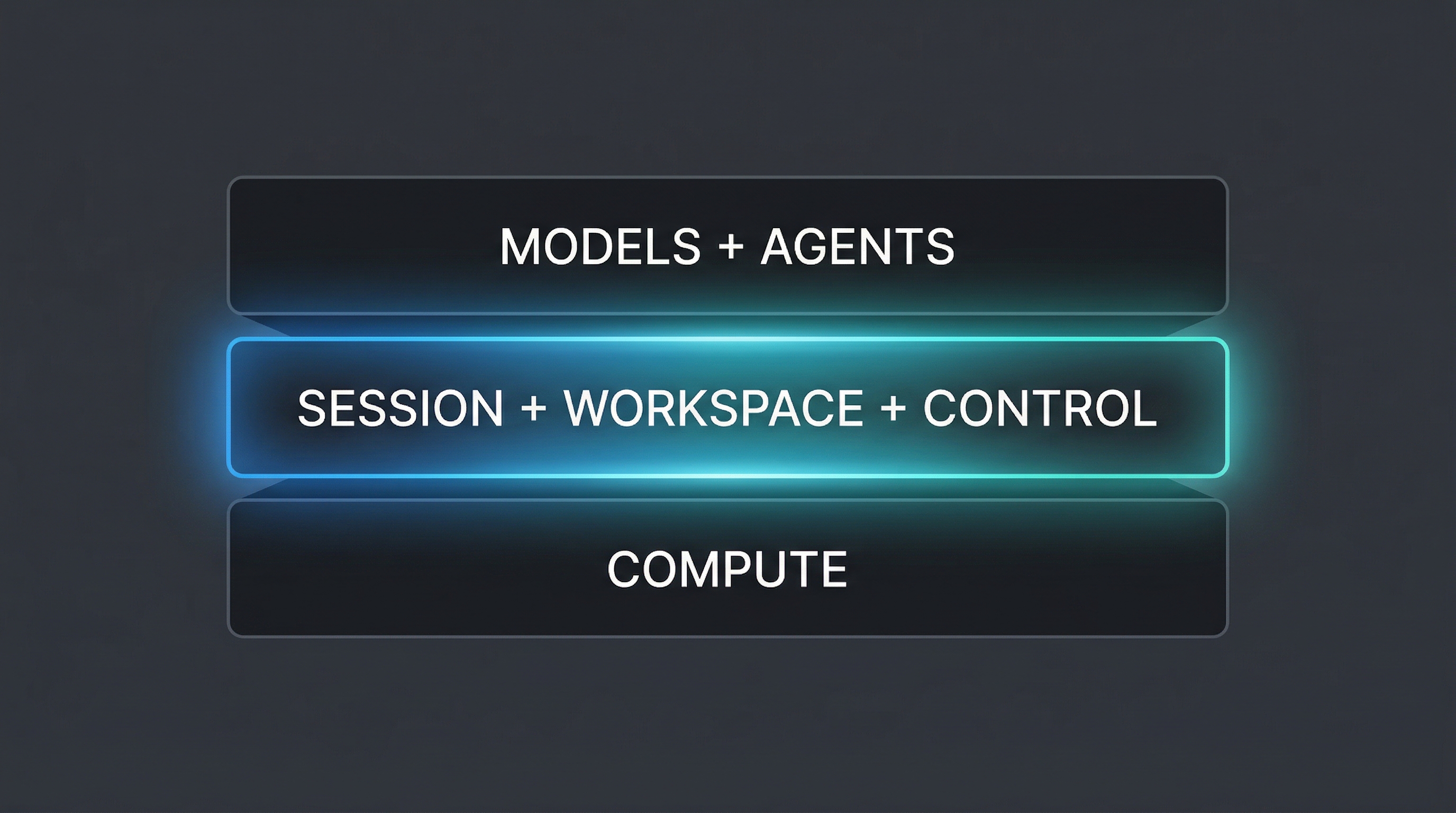

The missing layer

Every team that's serious about AI-assisted engineering ends up building the same infrastructure. Reproducible environments where agents can run safely. Workspaces that keep the task, sessions, artifacts, and approvals together even as environments restart. Credential management so agents can access internal tools without leaking secrets. CI integration so agent work gets verified the same way human work does.

Ramp built Inspect: background coding agents running in full-stack sandboxes wired into GitHub, Slack, Sentry, and Datadog. Within months, agents were producing a significant share of their merged PRs. Stripe built Minions. Every company that gets serious about this builds the same middle layer.

They buy the compute. They use the models. But the layer in between gets built from scratch, every time: the environment definitions, the workspace model, the credential vault, the connection fabric, the approval system.

That's what we're building.

What General Volition does

We sell the workspace layer for engineering agents. The environment is where code runs: repos, tools, network access, and scoped credentials. The workspace is where work lives: sessions, tasks, artifacts, approvals, and handoff around those environment runs.

Companies define their environments once (repos, container images, secrets, tool connections, permissions, and policies) and then attach those environments to workspaces that track the job from first run to final PR. In the early rollout, we embed with your team to make sure both layers are right: the environment is wired correctly, and the workspace maps to how your team actually works.
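As a rough illustration of what "define once" could look like, here is a minimal Python sketch of an environment definition. The class, its fields, the `can_reach` helper, and the sample values (`org/api`, `api-dev:latest`, `STAGING_DB_URL`) are all hypothetical and are not General Volition's actual schema. The one idea it tries to capture is that a definition stores secret names rather than secret values, so it can be shared and attached freely.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EnvironmentDefinition:
    """Hypothetical: a reusable, declarative description of where code runs."""

    name: str
    repos: tuple[str, ...]                 # repos to check out
    image: str                             # container image to boot from
    secret_refs: tuple[str, ...] = ()      # vault entry names, never raw values
    tools: tuple[str, ...] = ()            # tool connections, e.g. "sentry"
    allowed_hosts: tuple[str, ...] = ()    # network egress allowlist

    def can_reach(self, host: str) -> bool:
        # Deny by default: only hosts listed in the policy are reachable.
        return host in self.allowed_hosts


# Defined once; frozen, so every workspace that attaches it sees the same thing.
backend = EnvironmentDefinition(
    name="backend-staging",
    repos=("org/api",),
    image="api-dev:latest",
    secret_refs=("STAGING_DB_URL",),
    tools=("sentry", "datadog"),
    allowed_hosts=("staging.internal",),
)

print(backend.can_reach("staging.internal"))  # True
print(backend.can_reach("example.com"))       # False
```

Making the definition immutable is a deliberate choice in this sketch: a workspace can point at it without worrying that another team's edit will silently change the machine underneath a running job.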

We ship with a default coding agent, but you can also bring your own. The product is not the agent. The product is the reusable workspace layer underneath, backed by real environments.

Think of it as a cloud laptop for the work: one agent can own it, multiple agents can share it, and a human can jump in whenever needed.

- An environment is the runnable machine: the checked-out repo, the installed tools, the network access, and the scoped credentials needed to do the work.

- A workspace is the durable work object: the task thread, the sessions, the artifacts, the approvals, and the PR lineage around one or more environment runs.

- A workspace can span one environment run or many.

- If an environment dies, the workspace survives. You spawn a fresh environment and keep going.

- Agents run in the cloud, not on someone's laptop. Close your lid, go home, and the work keeps moving.

- Developers use the same workspace as the agents, so they can hand work off to an agent, take it over themselves, or pass it to another human without rebuilding context from scratch.

- You can start with our default agent and bring your own agents over time without rebuilding the workspace layer underneath.

- Sessions are shared. When one engineer discovers a great workflow, that pattern becomes visible to the entire team instead of vanishing inside one person's prompt history.
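The environment/workspace split described above can be sketched as two objects with different lifetimes. This is a minimal Python model under stated assumptions: `EnvironmentRun`, `Workspace`, `current_run`, and `record` are hypothetical names, and a real system would persist the workspace and boot actual machines rather than flip a boolean.

```python
from dataclasses import dataclass, field


@dataclass
class EnvironmentRun:
    """Hypothetical: one live, disposable machine booted from a definition."""

    run_id: int
    definition: str
    alive: bool = True


@dataclass
class Workspace:
    """Hypothetical: the durable record that outlives any single run."""

    task: str
    env_definition: str
    runs: list[EnvironmentRun] = field(default_factory=list)
    log: list[str] = field(default_factory=list)

    def current_run(self) -> EnvironmentRun:
        # Reuse the latest run if it is alive; otherwise spawn a fresh one.
        if not self.runs or not self.runs[-1].alive:
            run = EnvironmentRun(len(self.runs) + 1, self.env_definition)
            self.runs.append(run)
            self.log.append(f"spawned run {run.run_id}")
        return self.runs[-1]

    def record(self, actor: str, note: str) -> None:
        # Agents and humans append to the same log, so handoff keeps context.
        self.log.append(f"{actor}: {note}")


ws = Workspace(task="fix checkout 500s", env_definition="backend-staging")
ws.current_run()
ws.record("agent", "reproduced the error in staging")
ws.runs[-1].alive = False          # the environment dies...
ws.current_run()                   # ...and a fresh one is spawned
ws.record("alice", "took over to review the patch")
print(ws.log)
```

The point of the sketch is that the log, task, and run history live on the workspace, so losing a run costs a reboot, not the context.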

Why not build it yourself?

Some companies should build this in-house. Ramp did, with a dedicated AI infrastructure team and hundreds of engineers. If you have that kind of team, you probably don't need us.

But most companies don't. Most engineering teams have 20 to 200 engineers and zero dedicated AI infra people. They want the outcome (agents producing verified, reviewable PRs against their real codebase) without spending six months building the plumbing.

That's who we're for.

What we believe

Agents should be pluggable. Models improve every quarter. Harnesses go stale. We don't want to bet the product on one agent being best forever. We want stable interfaces around workspaces, environment runs, and tool execution so better agents can plug in over time.
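One way to read "stable interfaces so better agents can plug in" is a small structural interface that every harness implements, with the workspace owning the history. The Python sketch below uses `typing.Protocol` for that; `Agent`, `step`, `DefaultAgent`, `InHouseAgent`, and `run_steps` are hypothetical names, and the agents return canned strings instead of calling a model.

```python
from typing import Protocol


class Agent(Protocol):
    """Hypothetical stable interface: anything that can take a step on a task."""

    name: str

    def step(self, task: str, history: list[str]) -> str: ...


class DefaultAgent:
    name = "default"

    def step(self, task: str, history: list[str]) -> str:
        return f"[{self.name}] step {len(history) + 1} on: {task}"


class InHouseAgent:
    name = "in-house"

    def step(self, task: str, history: list[str]) -> str:
        return f"[{self.name}] continuing after {len(history)} prior steps"


def run_steps(agent: Agent, task: str, history: list[str], n: int) -> list[str]:
    # The caller (the workspace) owns `history`; the agent reads it and
    # returns one new entry per step.
    for _ in range(n):
        history.append(agent.step(task, history))
    return history


history: list[str] = []
run_steps(DefaultAgent(), "fix flaky test", history, 2)
run_steps(InHouseAgent(), "fix flaky test", history, 1)  # swap harness mid-task
print(history)
```

Because the history lives outside the agent, swapping one harness for another mid-task is just a different argument to `run_steps`.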

The session is not the context window. The session is a durable object that survives agent restarts, model swaps, and human takeover. The model's context window is just a temporary view into it. That's what makes long-running work, resumability, and multi-agent collaboration possible.
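The distinction between the durable session and the context window can be shown with a small model: the session keeps every event, and each model call gets a bounded view of it. This Python sketch assumes a simple "most recent events that fit" truncation and counts characters instead of tokens; `Session` and `context_view` are hypothetical, and a real system might summarize older events rather than drop them from the view.

```python
from dataclasses import dataclass, field


@dataclass
class Session:
    """Hypothetical durable session: the full record lives here, not in the model."""

    events: list[str] = field(default_factory=list)

    def append(self, event: str) -> None:
        self.events.append(event)

    def context_view(self, budget_chars: int) -> list[str]:
        """Return the newest events that fit in the budget, oldest first.

        Character count stands in for a real tokenizer (an assumption).
        """
        view: list[str] = []
        used = 0
        for event in reversed(self.events):
            if used + len(event) > budget_chars:
                break
            view.append(event)
            used += len(event)
        return list(reversed(view))


s = Session()
for e in [
    "task: fix flaky test",
    "agent A: ran suite",
    "agent A: restarted",
    "model swapped",
    "agent B: patch ready",
]:
    s.append(e)

view = s.context_view(40)
print(view)           # the temporary window the next model call would see
print(len(s.events))  # the session itself still holds everything
```

Restarting an agent or swapping the model only changes who reads the view; the session's event list is untouched.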

Background matters more than foreground. We're not building a cloud IDE. We're building the runtime where agents work while you sleep. The human interface for reviewing, approving, and steering matters, but it is not the product.

Trust is earned through traceability. Teams hesitate to merge agent PRs when they can't see the reasoning. Every workspace in General Volition holds the complete audit trail, and every session inside it shows what was attempted and why. Computer-use models can run QA against the live environment, click through the product, and verify the change the same way a human would. If you want to check it yourself, you can log into that same test environment and test it manually. That's what turns "I hope this is right" into "I can see exactly why this was done."

Where we're headed

Today we're focused on engineering: background coding agents with full-stack access producing verified PRs. But the substrate we're building (reusable environment definitions, durable workspaces, and swappable harnesses) is not specific to code.

The same architecture applies to any long-running agent work. The same problems of credential management, session persistence, traceability, and human oversight show up everywhere. We're starting with engineering because that's where the pain is sharpest and the agents are already most capable. But we're building the foundation for something broader.

Come talk to us

We're looking for engineering teams already using AI coding agents and hitting the ceiling. If your agents can write code but can't verify it, or your team is drowning in unreviewed PRs, we want to hear from you.